Once we leave the boundary of tempo ± leniency, we use a hill-climbing search, starting from the lowest frequency, to find each peak amplitude. After finding a local peak amplitude (the frequencies just below and just above have lower amplitudes), we run it through a checker to see whether the maximum amplitude is above the weighted threshold. Because MuseScore renders higher-pitched notes louder than lower-pitched notes, we weight our threshold so that high-pitched notes receive a bigger threshold.

After that check, we compare the minimum and the maximum amplitude of the frequency to determine whether it is a new note or a continuation of a previous note. For example, at 349.228 Hz (the note F4), if the minimum amplitude found within the interval is 1300 and the maximum is 7600, it is most likely a new note. If the minimum is 2000 and the maximum is 2300, it is most likely a continuation, and we do not recognize it as a new note. If the frequency passes, we append a tuple of the frequency and its maximum amplitude to the time_list.
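The peak check described above can be sketched roughly as follows. This is only an illustration, not the project's code: the function name `find_new_notes`, the linear frequency weighting (`ref_freq`), and the ratio rule for telling a new note from a continuation (`new_note_ratio`) are all assumptions, since the text does not give the exact formulas. A plain left-to-right scan for local maxima stands in for the hill-climbing search.

```python
def find_new_notes(freqs, min_amp, max_amp, threshold,
                   ref_freq=440.0, new_note_ratio=2.0):
    """Return (frequency, max amplitude) tuples for peaks that look like new notes.

    freqs is sorted from lowest to highest; min_amp[i] and max_amp[i] are the
    minimum and maximum amplitudes recorded for freqs[i] over the interval.
    """
    time_list = []
    for i in range(len(freqs)):
        left = max_amp[i - 1] if i > 0 else float("-inf")
        right = max_amp[i + 1] if i < len(freqs) - 1 else float("-inf")
        # Local peak: both neighbouring frequencies are quieter.
        if not (max_amp[i] > left and max_amp[i] > right):
            continue
        # ASSUMPTION: the threshold grows linearly with frequency.
        weighted_threshold = threshold * (freqs[i] / ref_freq)
        if max_amp[i] < weighted_threshold:
            continue
        # ASSUMPTION: a large swing between min and max means a new note,
        # while a small swing means the previous note is still ringing.
        if max_amp[i] >= new_note_ratio * max(min_amp[i], 1):
            time_list.append((freqs[i], max_amp[i]))
    return time_list


if __name__ == "__main__":
    freqs = [329.628, 349.228, 369.994]  # E4, F4, F#4
    print(find_new_notes(freqs, [500, 1300, 400], [1000, 7600, 900], 1000))
    print(find_new_notes(freqs, [500, 2000, 400], [1000, 2300, 900], 1000))
```

With the F4 figures from the text, the first call keeps the peak (1300 to 7600 is a large swing) and the second drops it (2000 to 2300 is treated as a continuation). The cut-off ratio of 2.0 is arbitrary and would need tuning against real AnthemScore output.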

For example, if the song has a tempo of 120 BPM, an offset of […].03, the checker would find and consider the time intervals between […].25s, offsetting it would make the middle […].3s, and the leniency would make it search […]. If the frame’s timestamp is within the tempo ± leniency, then we record the minimum and maximum amplitude for each frequency over the whole interval.
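A minimal sketch of the tempo ± leniency test is given here, assuming that beats fall at `offset + k * 60 / tempo` seconds for whole numbers `k`. The function name `in_beat_window` and this beat-grid definition are assumptions made for illustration; the project may well use a finer subdivision of the beat.

```python
def in_beat_window(timestamp, tempo_bpm, offset, leniency):
    """Return True if timestamp (seconds) lies within leniency of a beat.

    ASSUMPTION: beats fall at offset + k * (60 / tempo_bpm) seconds.
    """
    period = 60.0 / tempo_bpm  # seconds between consecutive beats
    phase = (timestamp - offset) % period  # time since the previous beat
    distance = min(phase, period - phase)  # distance to the nearest beat
    return distance <= leniency


if __name__ == "__main__":
    # Illustrative values only: 120 BPM, 0.05 s offset, 0.03 s leniency.
    for t in (0.40, 0.53, 0.55, 0.57):
        print(t, in_beat_window(t, 120, 0.05, 0.03))
```

Frames for which this returns True would have their amplitudes folded into the running minimum and maximum for each frequency; frames outside the window trigger the peak search instead.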

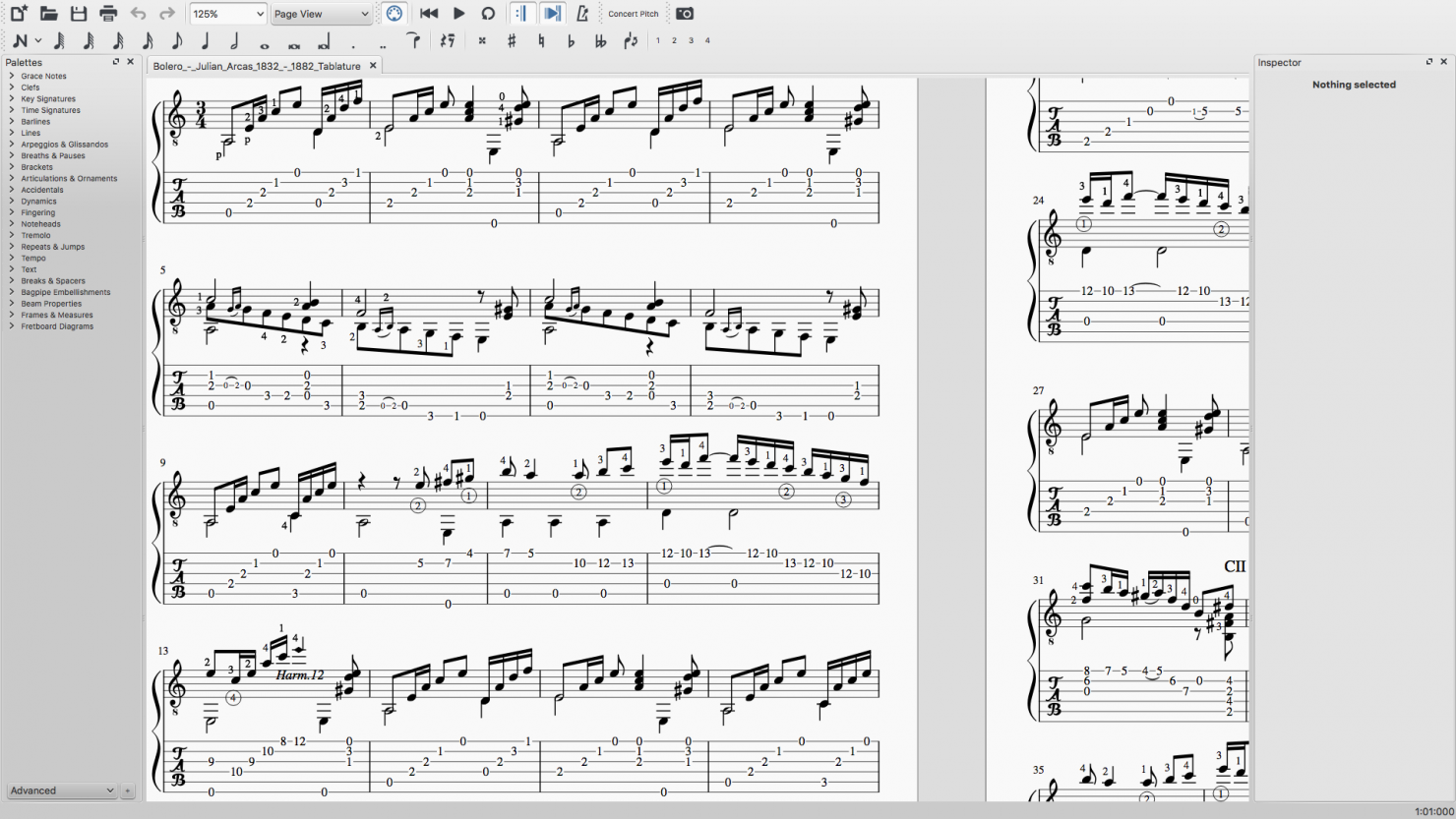

With our note frequencies from AnthemScore, we can then frame our problem as a constraint satisfaction problem.

First, we pre-process the output into a matrix F given an amplitude threshold. Then, our variables consist of a copy of the processed frequency list in a new matrix called N, where the variable N_{i,t} is the note played by the ith agent at time t. Each variable’s domain depends on the list of notes in F for every given t.

Finally, there are two constraints needed. First, we must minimize the distance between notes played by each single agent for every adjacent pair of notes with respect to time. Second, we must make sure each agent plays a different note, so no two note variables at a given time t can have the same value.

Our naive solution is to brute-force the entire domain space for solutions to our constraints, but because the domain space is so large, this is not feasible within regular time constraints. Next, we tried randomly searching the domain space for improvements to our optimization function, with a limited number of iterations, to reach close results in a shorter amount of time. Finally, our best solution is a greedy search algorithm that currently searches for the optimal choices for each agent in turn, removing previous agents’ decisions from the domain list, until the frequency matrix is empty.

Preprocessing

First, we create audio files to feed AnthemScore and output […]. We used MuseScore 3 to make music and then export it as audio files, either MP3 or .wav.

After we give AnthemScore the files, we let AnthemScore’s neural network decode the amplitudes of each frequency sorted by […]. Once converted, we input it into our create_note_list function within the music_translator.py file.

Alongside the file_name, we also need to give the function the tempo (beats per minute), the threshold (how loud the amplitude must be to register as a note), the offset (how far the song is from time 0 seconds), and the leniency (how far offbeat the song may be from the tempo’s beat). When run, the function sets up these values and iterates through all the frames. We consider a time interval only if it falls within the boundary of tempo ± leniency.
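The greedy search described above could look roughly like the sketch below. It assumes that F is a list with one entry per time step, each entry holding the notes (as integers, for example semitone or noteblock indices) that must be played at that time, and that "distance" means the absolute difference between consecutive notes. The name `greedy_assign`, the integer note encoding, and the rule that an agent starts on the lowest available note are all assumptions made for illustration.

```python
def greedy_assign(F):
    """Greedily split the notes in F into one schedule per agent.

    F[t] lists the notes to play at time step t. Each agent in turn picks,
    at every time step, the remaining note closest to its previous note.
    Picked notes are removed so later agents cannot reuse them. Agents are
    added until no notes are left. None marks a time step with no note.
    """
    remaining = [list(notes) for notes in F]  # work on a copy of F
    schedules = []
    while any(remaining):  # stop once every time step is empty
        schedule = []
        prev = None
        for notes in remaining:
            if not notes:
                schedule.append(None)
                continue
            if prev is None:
                # ASSUMPTION: start on the lowest available note.
                choice = min(notes)
            else:
                # Greedy step: smallest jump from the agent's last note.
                choice = min(notes, key=lambda n: abs(n - prev))
            notes.remove(choice)
            schedule.append(choice)
            prev = choice
        schedules.append(schedule)
    return schedules


if __name__ == "__main__":
    print(greedy_assign([[3, 10], [4, 11], [12]]))
```

Because every note at a time step is handed to exactly one agent, the different-note constraint holds whenever the notes within each F[t] are distinct. The distance constraint is handled only locally, so the result is not guaranteed to be globally optimal.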

CS 175: Project in AI (in Minecraft)

Project Summary

For our project, we created an orchestra of AI agents that are capable of listening to music and recreating a song in Minecraft by scheduling each other to hit the appropriate Minecraft noteblocks on time. The outputs are the sounds from the noteblocks the AI touches and a log that records what the AI hit, with timestamps. Our AI not only tries to play the song as close to the audio file as possible, but also tries to achieve this goal in the fewest steps it can find. We want to simulate a MIDI-like contraption: with AI, we can play songs as efficiently as possible without knowing how to play music.